14 min read

Everything You Need to Know About Technical SEO

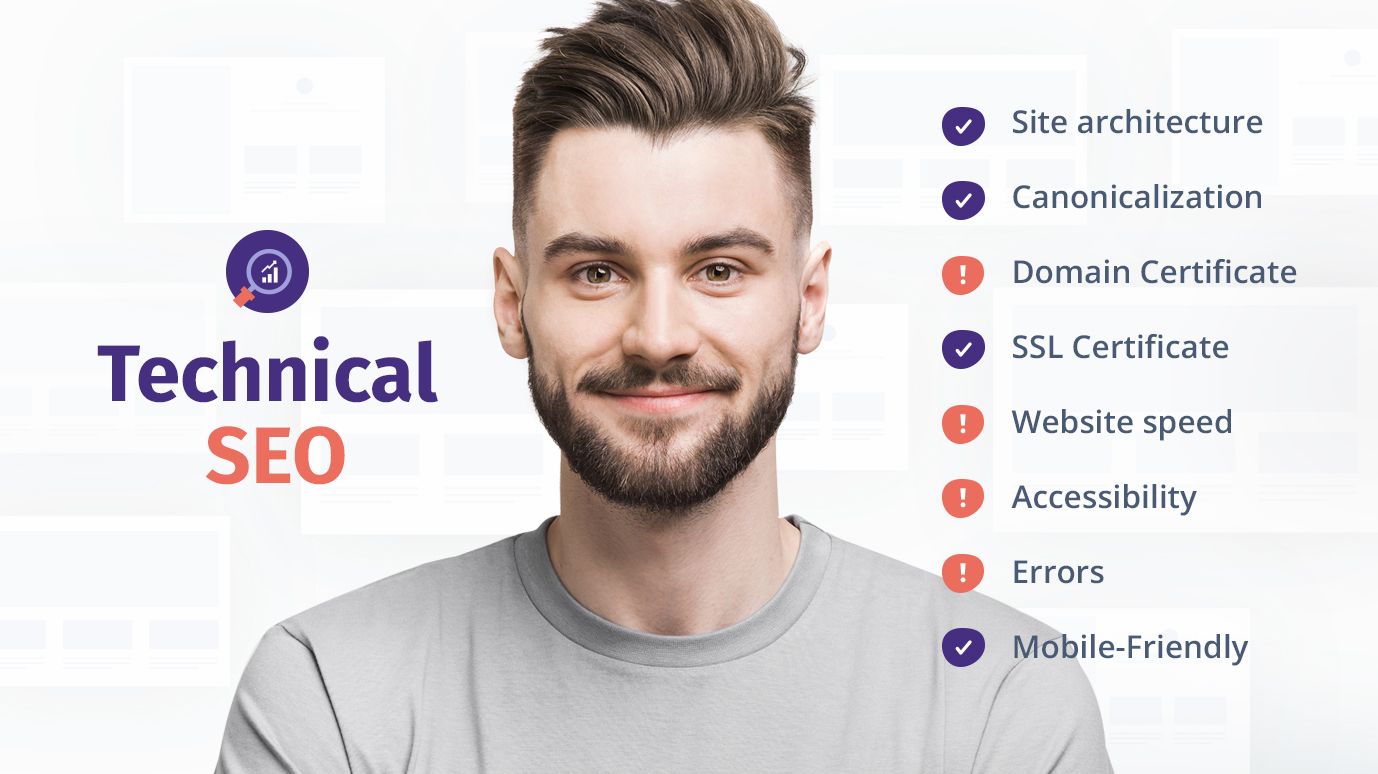

Technical SEO is what helps your business stand apart from the competition in search results. Search engines have some basic requirements for websites; those that don’t meet the requirements do poorly.

Performing well in search engine rankings is crucial for any modern business. New customers find many businesses through search engines, so your company won’t do well if your competitors’ sites rank higher than your site.

In this article, we’ll look at all the technical aspects of on-site SEO. From making pages load faster to structuring your site appropriately, you’ll learn everything you need to know to assess and improve your own site’s technical SEO. Improving this kind of SEO can have big effects on your site’s search engine performance.

It’s also important to note what we won’t look at. In particular, we’re not going to cover backlinks and off-site SEO or the importance of having quality content. While these topics are very important for ranking highly in search results, they’re outside the scope of this article.

First, let’s start by exploring what technical SEO is, why it matters, and a few of the most common components.

Concepts

In a nutshell, technical SEO is making the right technical changes to your website to improve its rankings in search engine results pages (SERPs). Search engines need to be able to crawl and understand your website to rank it. No matter how good your content is, your website will not perform well on Google or other search engines without good technical, on-site SEO.

Search Engines: A Primer

To properly understand SEO, you have to understand how search engines work at a basic level. Fundamentally, they work in three phases:

- First, the search engine follows a link to your website. Whether this comes from another site or simply one of your pages, this link provides an entry point for the Googlebot (or Bingbot) to start looking at your site.

- Next, it crawls the page, looking for keywords and other bits of information that might show up in search queries. The search engine renders your entire page just like a browser would and traverses the content from there.

- Finally, it adds your pages to its indices and determines rankings for different search queries using information in the index.

Technical SEO can make a big difference at all of those stages. Technical SEO mistakes can prevent search engine bots from accessing your website in the first place. Other errors can make their job harder when crawling your content. Any issues that the search engine finds will be reflected in your site’s rankings.

With technical SEO, lots of small differences will make or break your website’s rankings. That’s why it’s worthwhile to spend time working on this important aspect of search engine optimization.

Structure is Everything

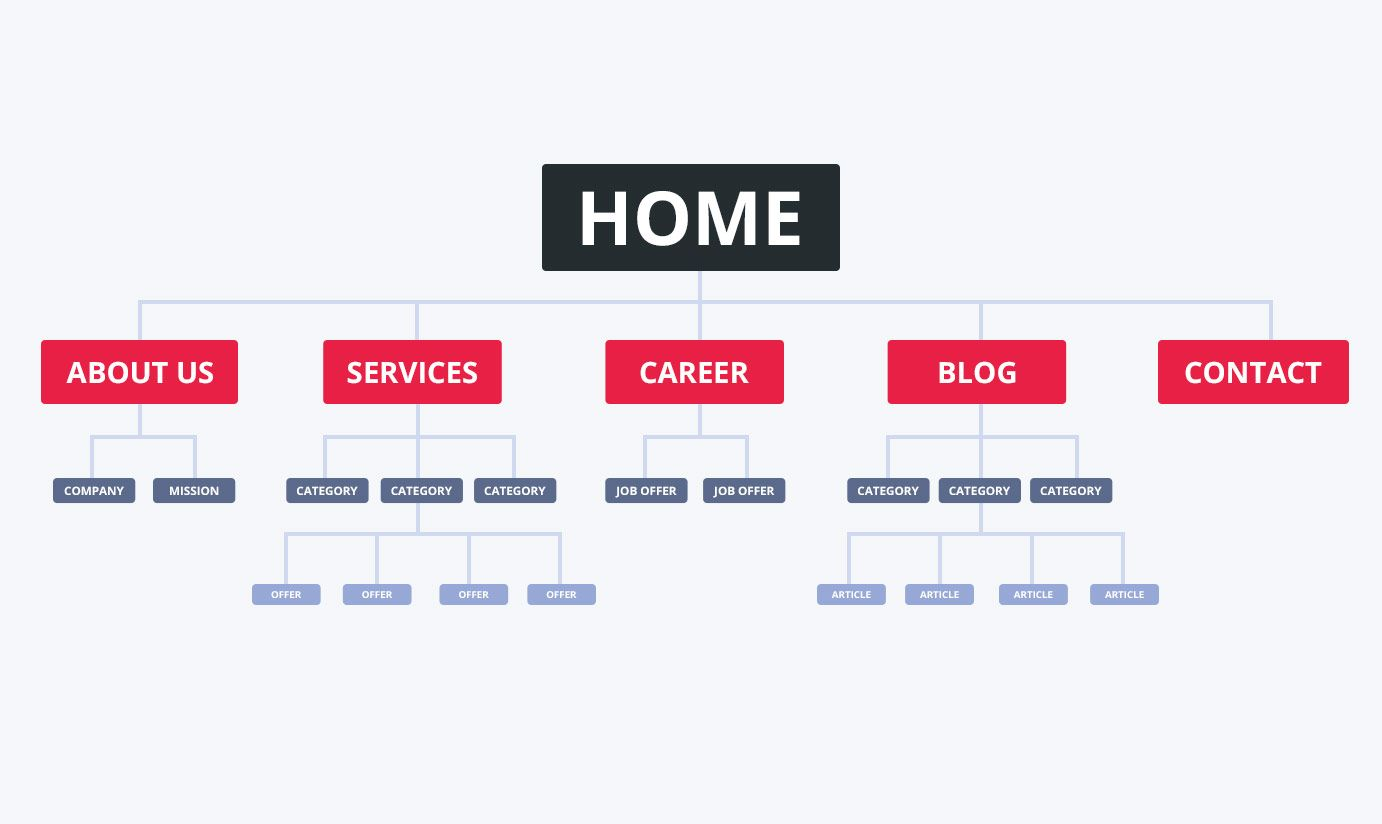

A large part of what makes websites easy for search engines to understand is structure. Badly structured websites are hard to crawl and index. Even human visitors find it difficult to wrap their heads around a poorly designed website structure.

In general, try to avoid making a super deeply-nested structure. Any given piece of content shouldn’t be more than a few clicks away from your homepage. If some content is difficult to reach, search engines might not index it. Even if a complicated structure makes sense in your head, it probably won’t make sense to Google—or even another person trying to browse your site.

Visualize your website as a sort of tree structure. If it’s flat, search engines will be able to crawl it with ease. If it’s a tangled mess filled with orphan pages (pages that cannot be reached via internal links), expect to have all kinds of trouble later on.
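To make the tree idea concrete, here is a minimal sketch that measures click depth from the homepage and lists orphan pages. The `site` link map and its URLs are hypothetical; in practice the map would come from a crawl of your own site.

```python
from collections import deque

def click_depths(links, home):
    """Breadth-first search from the homepage; returns {page: clicks from home}."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

def orphan_pages(links, home):
    """Pages that appear in the link map but cannot be reached from the homepage."""
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return sorted(all_pages - set(click_depths(links, home)))

# Hypothetical internal-link map: page -> pages it links to.
site = {
    "/": ["/blog", "/pricing"],
    "/blog": ["/blog/technical-seo"],
    "/blog/technical-seo": [],
    "/pricing": [],
    "/old-landing-page": ["/pricing"],  # no page links here
}

depths = click_depths(site, "/")
orphans = orphan_pages(site, "/")
print(depths)   # deepest page is two clicks from the homepage
print(orphans)  # pages with no path from the homepage
```

A large maximum depth or a non-empty orphan list is a hint that the structure has drifted away from the flat design described above.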

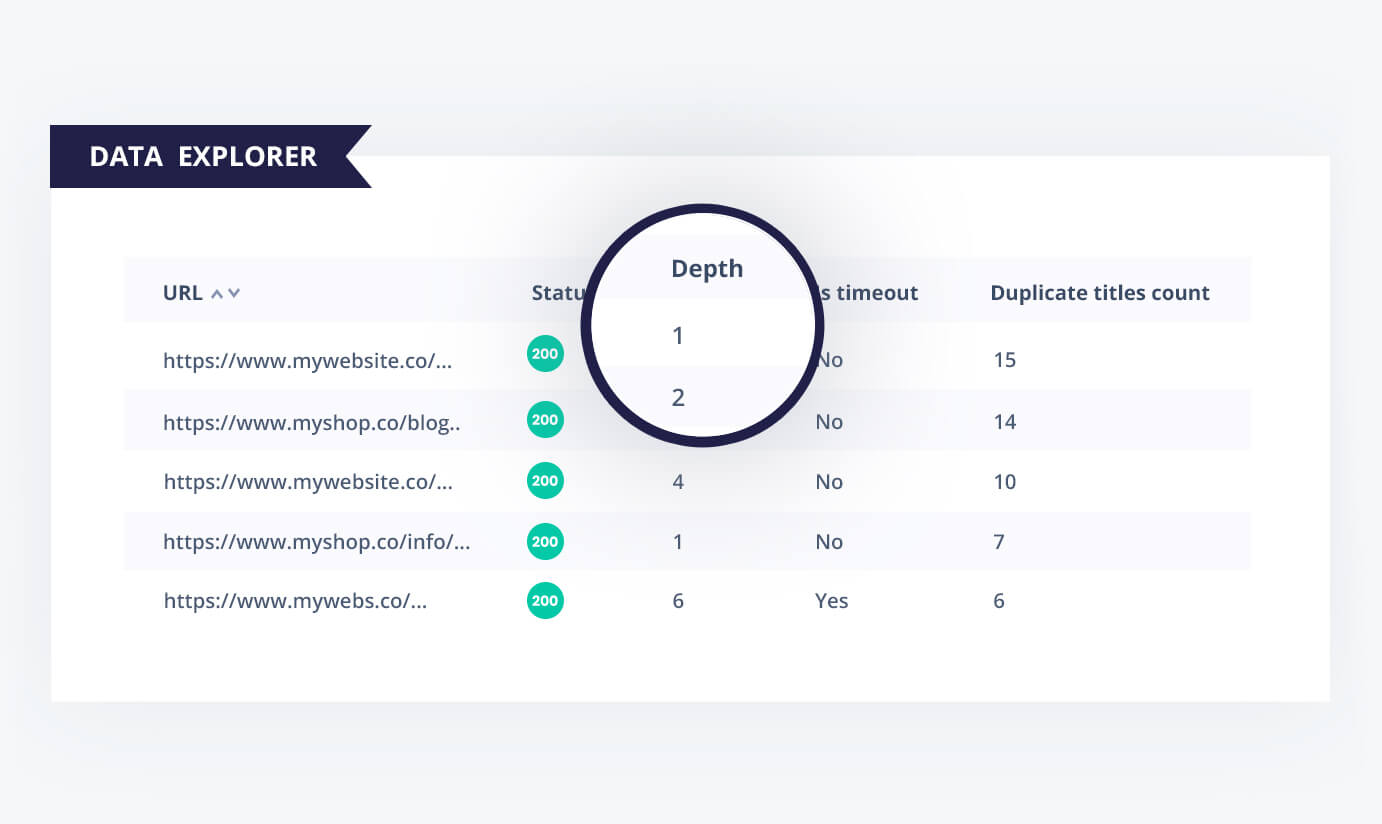

Seodity’s Data Explorer tool includes a useful Depth factor, which can give you a good idea of how deeply-nested your structure is. If you’re seeing a structure that deviates far from the perfect “flat” design, consider improving your structure now.

Another cool tool in this space is Visual Site Mapper, which crawls your site and shows you a huge web of how your pages connect to one another.

URL Structure

Although they’re frequently conflated, website structure and URL structure aren’t quite the same. Your website structure is determined more by the links between parts of the site than by what’s in the URL bar. A page with a super short URL might still be deeply nested.

Although overall site structure matters more than URL structure, designing your URLs to be human- and machine-readable matters too. Your URLs should mirror your site structure as much as possible so that your visitors understand where a page is on your site. Keep them short and readable wherever possible.

Unless you’re building the next Google Maps or another similarly advanced web app, don’t include long strings of data in URLs. Short text (one or two words between each path separator slash) is easier to read and copy.

Google also uses URL structure—in addition to other factors—to help determine the relationship between pages on your site. Be sure that your URLs make sense and are consistent throughout your website both for your human visitors and for search engines.
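As a rough illustration of these URL guidelines, the sketch below flags URLs that are long, deeply nested, wordy, or stuffed with data. The two-words-per-segment limit follows the advice above; the other thresholds and the example URLs are arbitrary assumptions, not official limits.

```python
from urllib.parse import urlparse

def url_issues(url, max_segments=4, max_words_per_segment=2, max_length=75):
    """Return a list of readability problems found in a URL."""
    parsed = urlparse(url)
    segments = [s for s in parsed.path.split("/") if s]
    issues = []
    if len(url) > max_length:
        issues.append("too long")
    if len(segments) > max_segments:
        issues.append("too deeply nested")
    if any(len(s.split("-")) > max_words_per_segment for s in segments):
        issues.append("wordy path segment")
    if parsed.query:
        issues.append("query string in URL")
    if parsed.path != parsed.path.lower():
        issues.append("mixed case path")
    return issues

# Hypothetical URLs for illustration.
clean = "https://example.com/blog/technical-seo"
messy = "https://example.com/Shop/Category/Items/View/product-details-and-full-specs?id=8812&session=XYZ"

print(url_issues(clean))
print(url_issues(messy))
```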

A Note About Sitemaps

Although we’ll cover sitemaps in more detail later on, it’s worth mentioning a few details before then. Sitemaps are machine-readable XML files that list the URLs on your site, optionally with metadata such as when each page was last modified.

Even though Google and other search engines make heavy use of sitemaps when figuring out how to index your content, they are not a replacement for good site structure. If you can’t get to every page within a few clicks without the help of a sitemap, Google can’t either.

Navigation Tips

Site structure and navigation are intrinsically linked. Your navigation should reflect the actual structure of your site. In theory, your website itself, navigation, and sitemap should all show the same flat, easy-to-navigate structure. Flat site structure also makes navigation easier; heavily nested pages are hard to put in menus, especially on mobile devices.

Breadcrumbs are one popular way to create internal links that define your website’s structure. Google even shows a breadcrumb-style trail in place of the traditional URL at the top of each search result nowadays. If your site structure involves categories and internal pages that don’t normally get linked externally, breadcrumbs can help search engines get a better idea of how your site is structured.
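One simple way to produce a breadcrumb trail is to derive it from the URL path. The sketch below assumes that your URLs mirror your site structure, as recommended earlier; the `breadcrumbs` helper and the example URL are hypothetical.

```python
from urllib.parse import urlparse

def breadcrumbs(url):
    """Return (label, href) pairs from the homepage down to the given page."""
    parts = [p for p in urlparse(url).path.split("/") if p]
    trail = [("Home", "/")]
    for i, part in enumerate(parts):
        label = part.replace("-", " ").title()
        trail.append((label, "/" + "/".join(parts[: i + 1])))
    return trail

trail = breadcrumbs("https://example.com/blog/technical-seo")
print(" > ".join(label for label, _ in trail))
```

Each pair can be rendered as an ordinary internal link, which gives crawlers one more path through your categories.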

Finding Crawling and Indexing Errors

By now, you’ve improved your site’s structure in a variety of ways. It should be more easily comprehensible for both humans and machines.

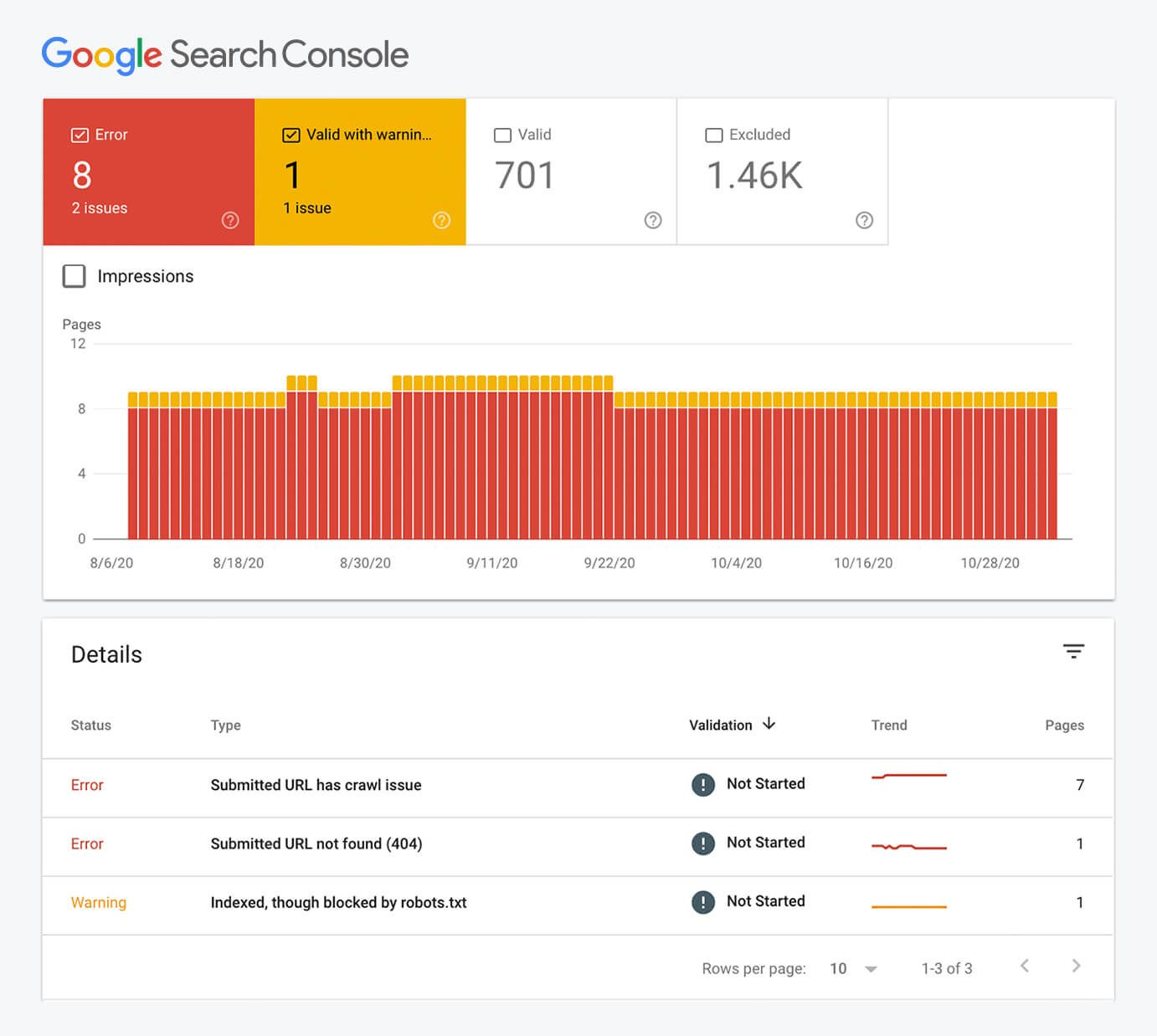

The next step is to find out whether, and why, Google and other search engines are having trouble visiting, crawling, and indexing your website. As mentioned in the Concepts section above, errors in each of these three areas can be detrimental to your rankings.

Here are a few of the most common issues with crawling and indexing on search engines:

- Pages deep within your website aren’t being indexed or don’t show up in search results. This can be largely alleviated by flattening your website structure, but sometimes the issue persists.

- The index for your website is out of date. Search engine spiders aren’t hitting your pages often enough.

- You’re inadvertently blocking Google and other search engines from accessing your website in the first place.

To start solving these problems, spend some time in the Google Search Console. In particular, look for the Coverage page. This section will give you lots of information about each of your pages that aren’t showing up in search results for some reason or another. It will also show you warnings, which aren’t fatal, but can be fixed for better rankings.

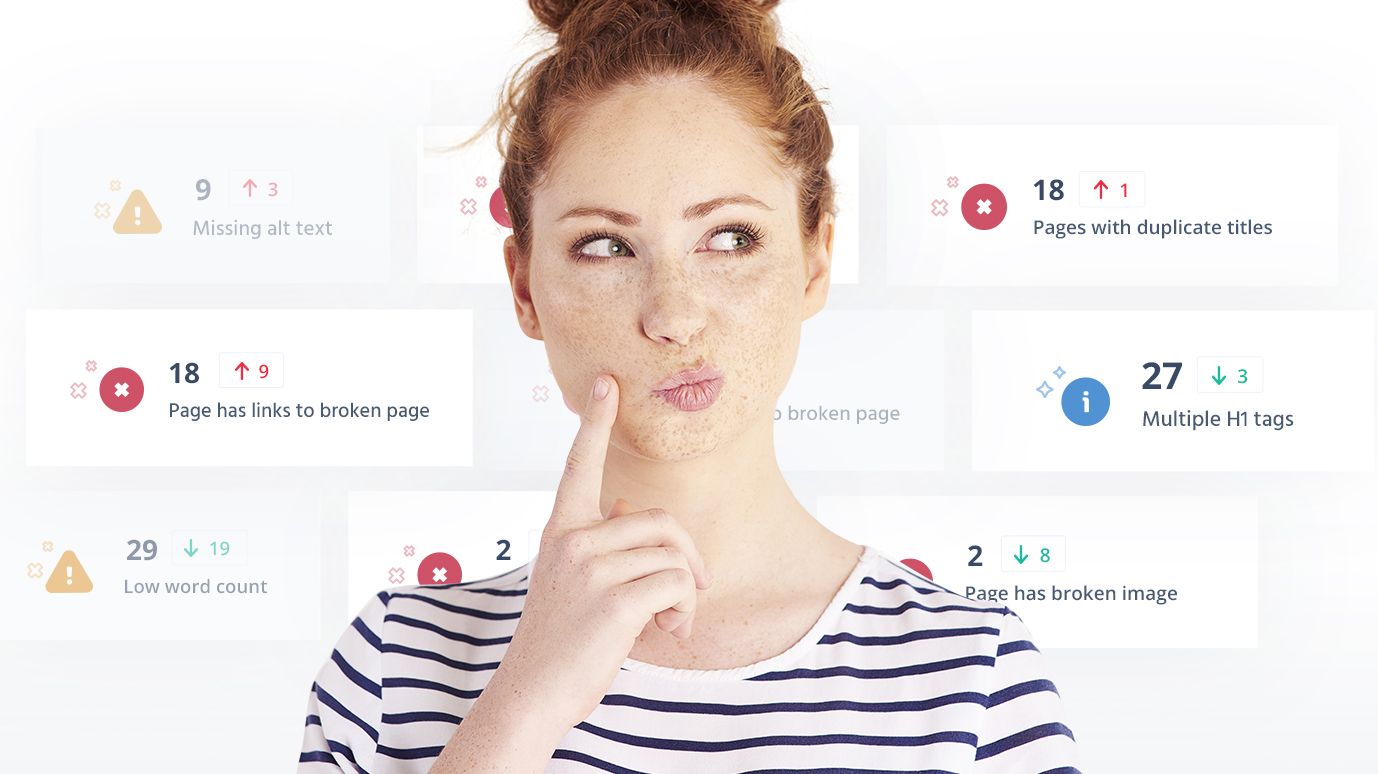

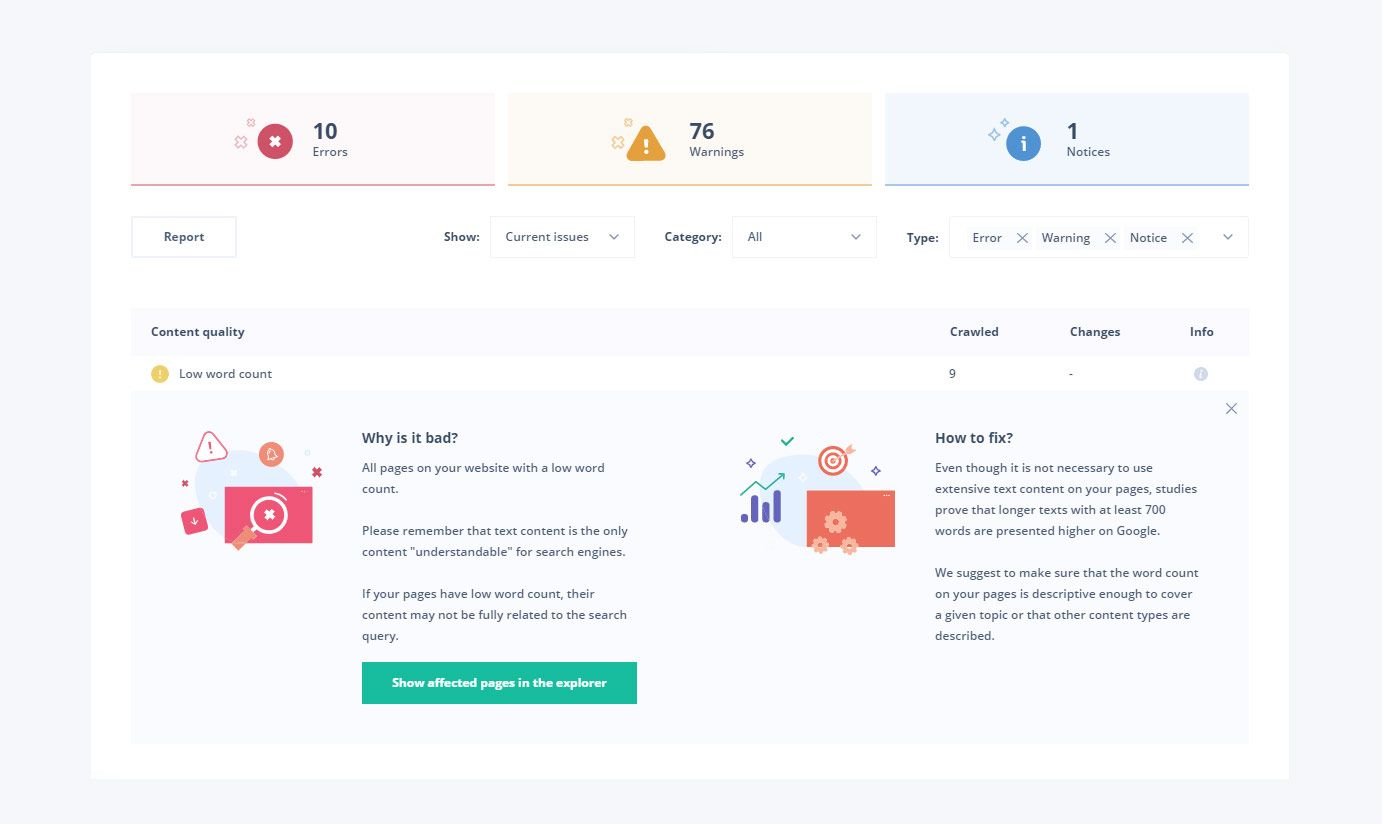

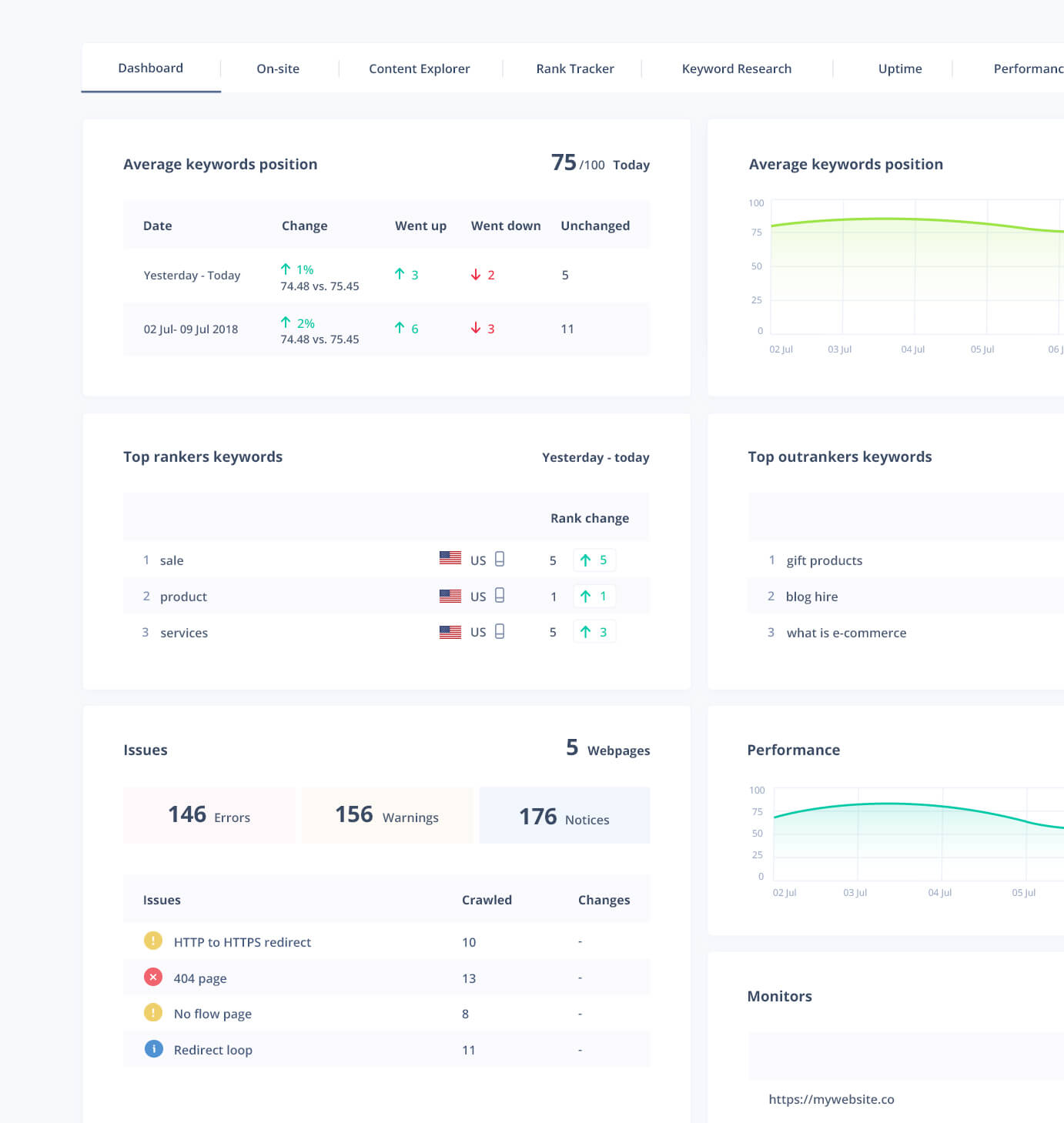

After looking through Google’s offering, fire up Seodity’s on-site audit feature. It will give you even more detailed data about each of your pages, helping you to fix errors that affect crawling and indexing.

Take Advantage of XML Sitemaps

XML sitemaps aren’t a replacement for good site structure, but they’re far from useless. Google still makes heavy use of sitemaps to discover and index new URLs.

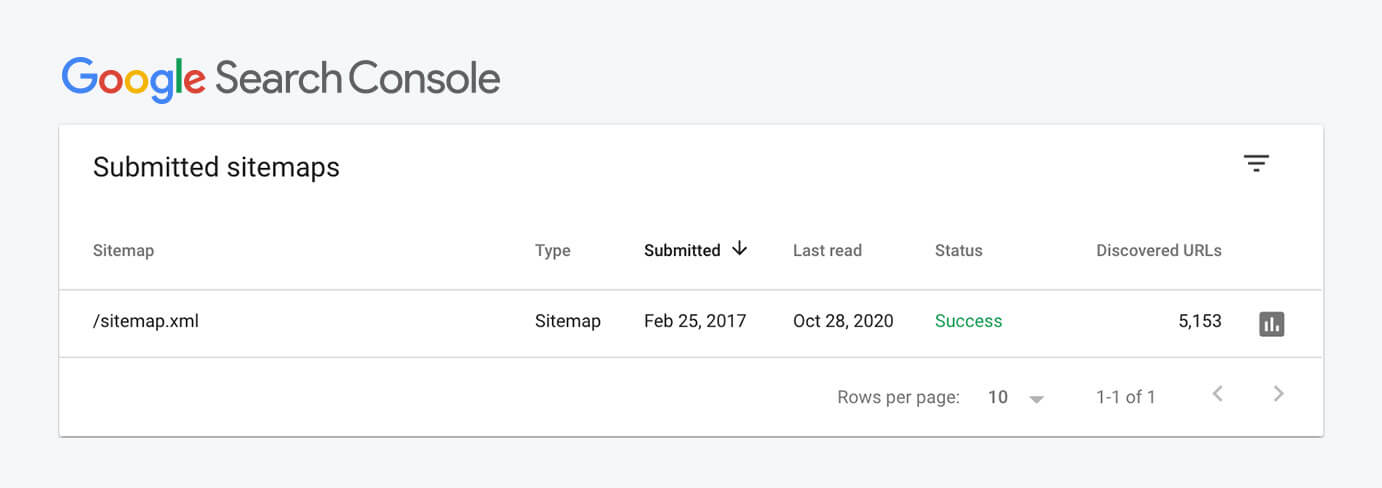

In the Google Search Console, you can check that your sitemaps are working properly. Underneath Coverage in the menu, you’ll see Sitemaps. In this section, make sure that your XML sitemaps have been submitted and read successfully and that they list all of the pages you want Google to index. If something is missing or the sitemap cannot be read, try using a sitemap validator tool to see if the issue is with your XML file.
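If you need to generate or sanity-check a sitemap yourself, a minimal sketch with Python's standard library might look like the following. The URLs and dates are placeholders, and the check only confirms that the file parses and lists the expected pages; it is not a substitute for a full validator.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(entries):
    """Build sitemap XML from (url, last_modified_iso_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body

def sitemap_urls(xml_text):
    """Parse sitemap XML and return the URLs it lists, in order."""
    root = ET.fromstring(xml_text.encode("utf-8"))
    return [loc.text for loc in root.iter("{" + SITEMAP_NS + "}loc")]

# Placeholder pages and dates.
pages = [
    ("https://example.com/", "2020-09-15"),
    ("https://example.com/blog/technical-seo", "2020-09-01"),
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

Comparing `sitemap_urls(sitemap_xml)` against the pages you expect to be indexed is a quick way to spot missing entries before submitting the file.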

Speed and Mobile

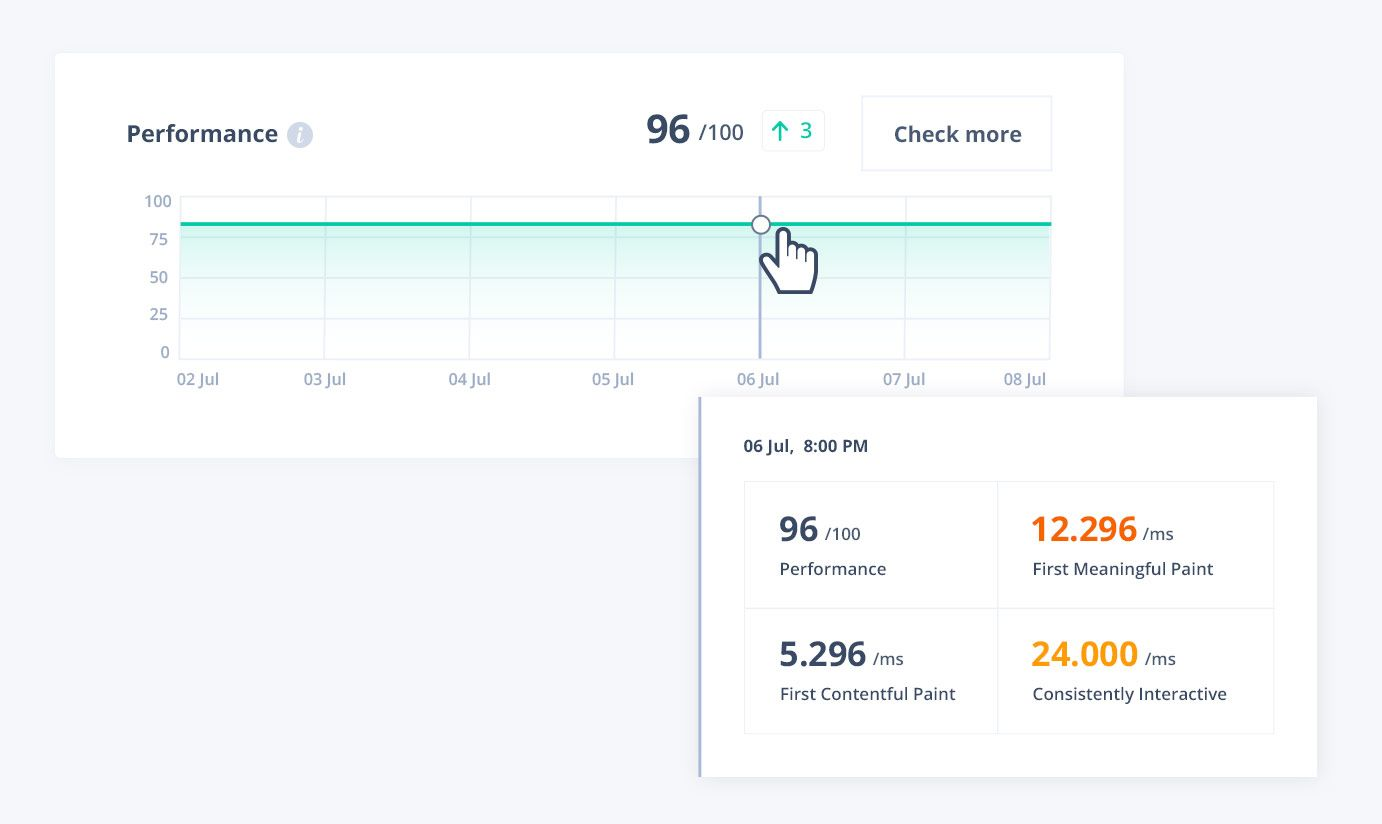

Whether it’s improving SEO, decreasing bounce rates, or even saving the planet, a faster website is a better website. Although caching, lazy loading, offloading images, and other strategies can make a bloated website faster, these quick fixes aren’t a complete solution.

One factor is more important than all the others: page size. Generally, a website is fast when its total transfer size is minimized. Sometimes, improving your website’s transfer size requires making some compromises. You might need to remove extra external scripts, minimize the number of web fonts you use, or even get rid of some images.

A page that loads in one second on a desktop computer with a fast internet connection can take much longer on an older smartphone with 3G. Given that most of today’s web browsing happens on mobile devices, it’s especially important to optimize for this use case.
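A back-of-the-envelope calculation shows why transfer size matters so much on slow connections. The page weights and bandwidth figures below are made-up assumptions, and the estimate ignores latency, so treat the results as a lower bound rather than a measurement.

```python
def transfer_seconds(total_bytes, megabits_per_second):
    """Rough lower bound: payload size divided by bandwidth, ignoring latency."""
    bits = total_bytes * 8
    return bits / (megabits_per_second * 1_000_000)

# Hypothetical page weight in bytes, by resource type.
page = {
    "html": 60_000,
    "css": 90_000,
    "scripts": 850_000,
    "fonts": 200_000,
    "images": 1_300_000,
}
total = sum(page.values())  # 2,500,000 bytes

fast = transfer_seconds(total, 100)  # assumed 100 Mbit/s broadband: 0.2 s
slow = transfer_seconds(total, 1.6)  # assumed 1.6 Mbit/s 3G-class link: 12.5 s
print(f"{total} bytes: {fast:.1f}s on broadband, {slow:.1f}s on a slow mobile link")
```

Under these assumptions, dropping the scripts and fonts would cut the slow-link figure by more than five seconds, which is why trimming the payload tends to beat most quick fixes.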

Speaking of Mobile…

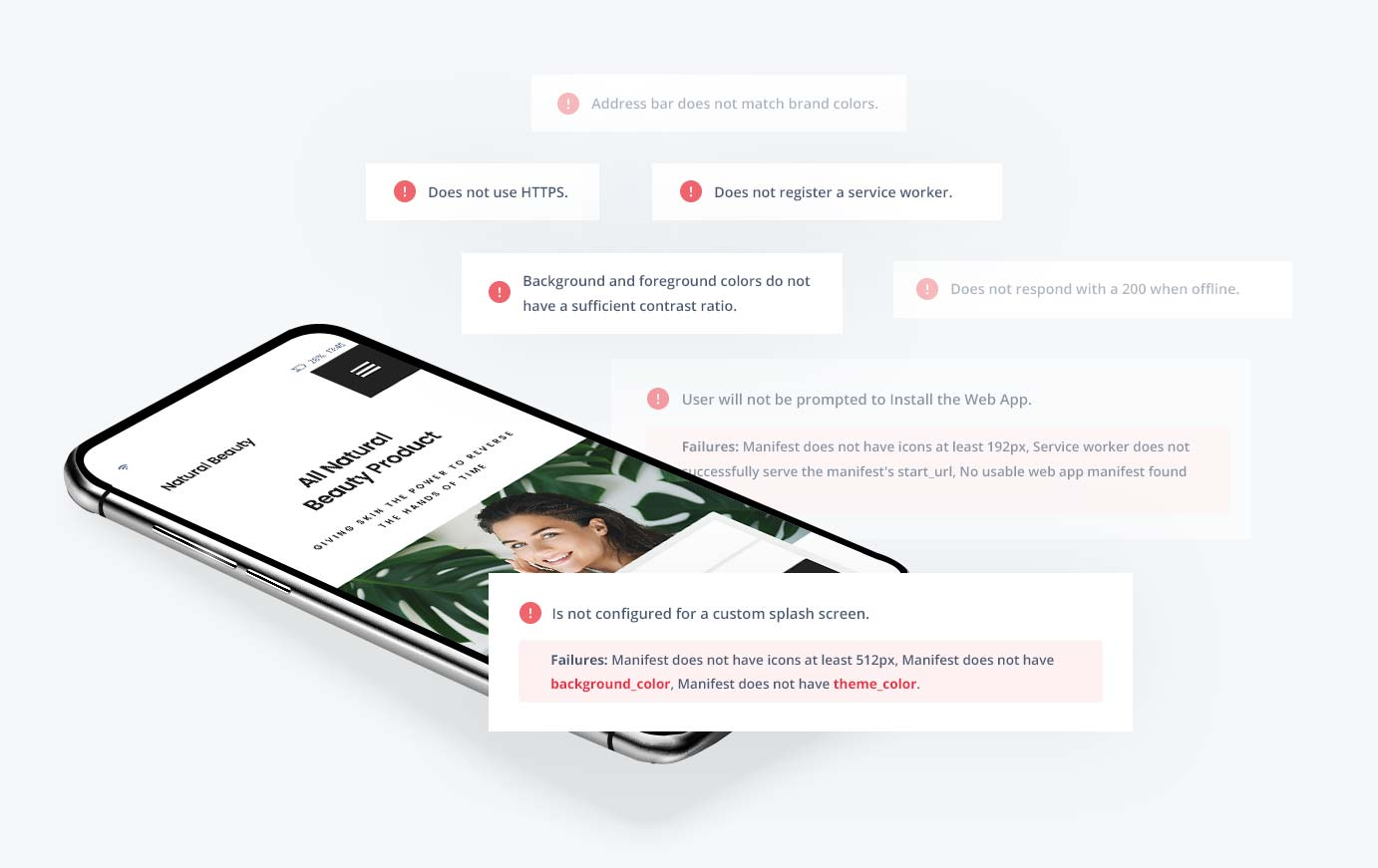

You should optimize your company’s website for mobile devices in other ways, too. In addition to making your website fast on a slow smartphone over a slow connection, you should also make sure that every single page is easy to use on a mobile device.

Here are a few of the most common issues with responsive sites:

- Images and long lines should not run off the edge of the screen. Even if a template or page design works responsively the vast majority of the time, sometimes a single page will contain an element that overflows the right side. This negatively affects user experience on mobile devices.

- Buttons, scrolling areas, and other interactive elements must be accessible from any device. Controls should not be so close together that they are unusable on a touchscreen. Similarly, everything should be designed with the assumption that hover-over effects aren’t possible. Sites with menus that only work with desktop mice still exist in 2020; don’t let your site be one of them.

- Text absolutely has to be readable. Text that is too small is rarer than it used to be, but it can still be a problem. One common culprit is text within images. Avoid this if you want to make sure that your images can be scaled, resized, and compressed to fit smartphone screens without losing readability.

- Scrolling should be smooth and use the device’s native scrollbar functionality. Beyond being a nuisance to users, scrolljacking can make it harder for search engine crawlers to understand the content on your website. Overriding scrolling functionality, especially on mobile devices, rarely makes sense.

Everything About Content

Most of the time, it’s your content that attracts visitors to your website. Search engines rank sites with genuinely useful, unique information above those that have little content or copy content from other sites.

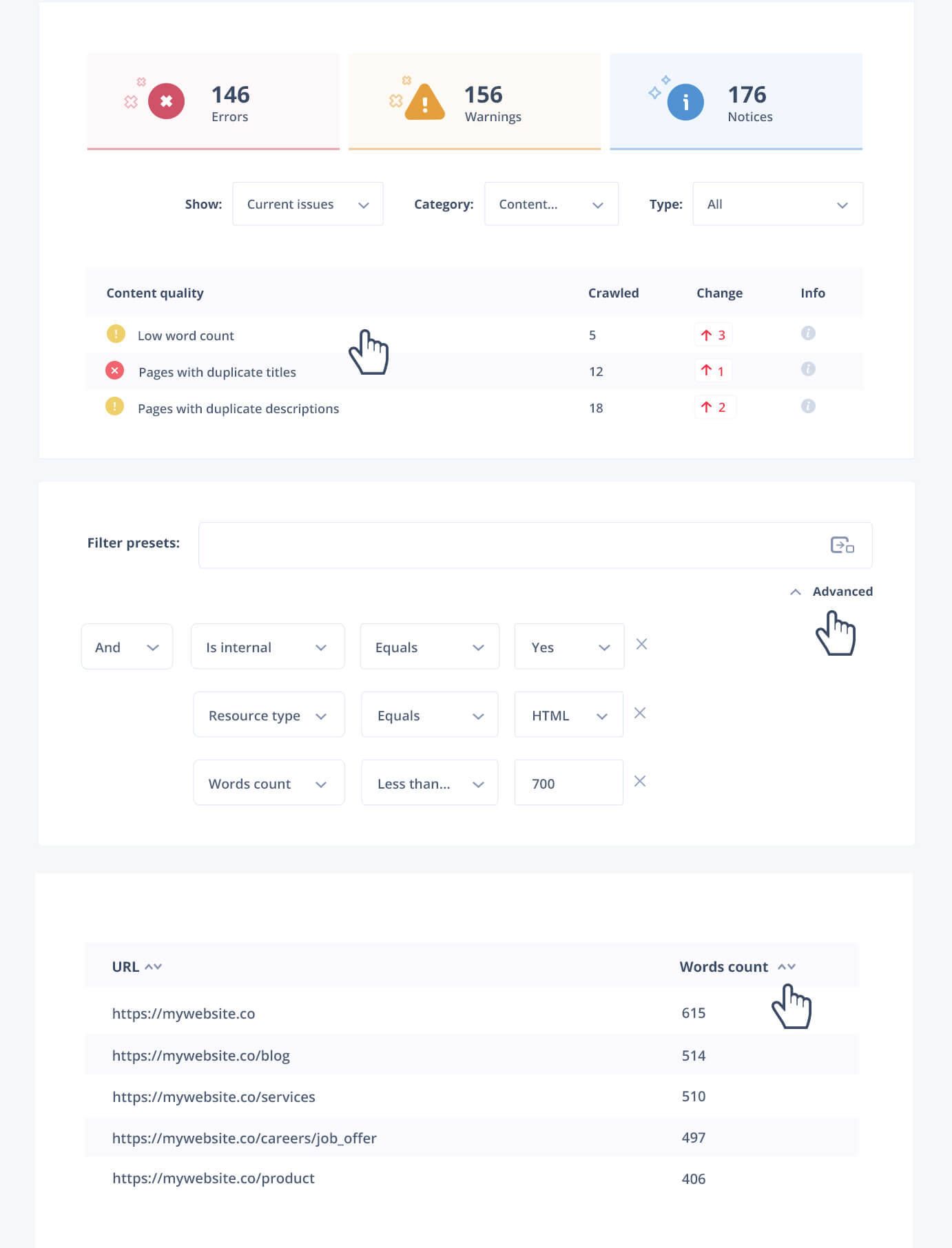

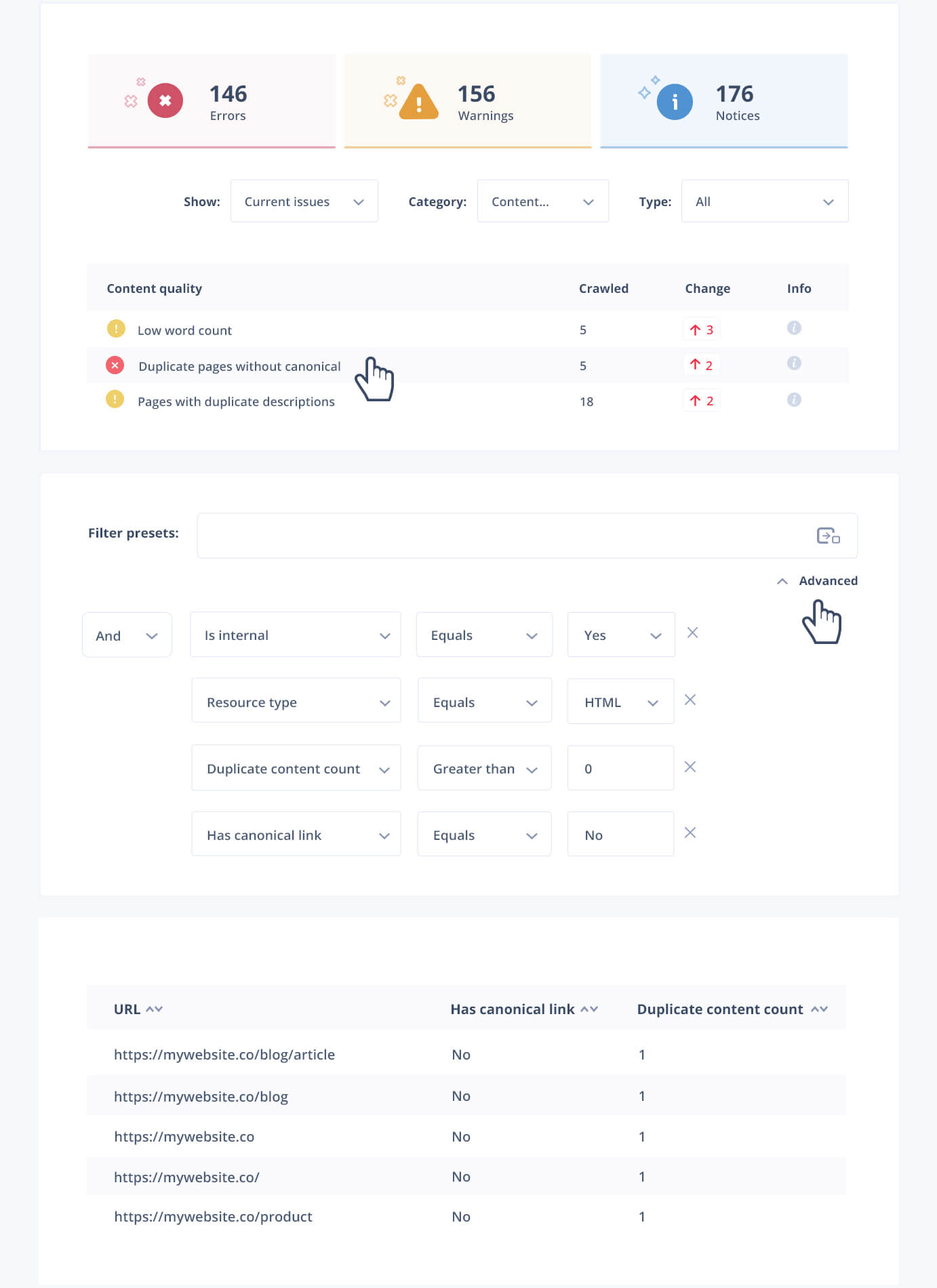

Seodity’s on-site SEO auditing tool can help you find duplicate content and pages with low word count. Once you’ve identified pages with duplicate content, remove them or add a robots meta tag with the noindex directive, which prevents search engines from indexing them. Either way, solving this issue will help your site perform better in search engine rankings without much extra effort.

Canonical URLs are another good solution to the duplicate content problem. In lots of cases, you’ll have a few different versions of a page with slightly different content. For example, you might be running an ecommerce site with lots of variations of products or a paginated forum app with some duplicate content.

When this happens, you can add a canonical link tag (a <link> tag with rel="canonical") to tell search engines which version of the page is the “original”. Search engines will be aware of the non-canonical versions, but they won’t punish you for duplicate content like they would without this change.
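To check which canonical URL a page actually declares, you can parse its HTML and look for a `<link>` tag whose `rel` is `canonical`. The sketch below uses Python's built-in HTML parser; the product page and its URLs are invented for illustration.

```python
from html.parser import HTMLParser

class CanonicalParser(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag it sees."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rel = (attrs.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel and self.canonical is None:
            self.canonical = attrs.get("href")

def canonical_url(html):
    parser = CanonicalParser()
    parser.feed(html)
    return parser.canonical

# Hypothetical product variation that points back to the main product page.
variant_page = """
<html><head>
  <title>T-Shirt (blue, size M)</title>
  <link rel="canonical" href="https://example.com/products/t-shirt">
</head><body>...</body></html>
"""

print(canonical_url(variant_page))
```

Running this over every variation of a page should return the same URL each time; a mix of different values, or `None`, suggests the tag is missing or inconsistent.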

Some Nitty-Gritty Details

Small changes can have big effects on how highly your site ranks in search engine results pages. In an effort to improve the web’s security and performance, Google gives small boosts to sites that follow modern practices. Plus, other small changes are just a good idea in general—like making it easy for search engines to find internationalized content.

In this section, we’ll look at some small, generally non-obvious changes that can make a big difference. Many of these issues can be detected automatically with technical SEO auditing tools like Seodity. Try using one of these tools on your site at the same time as you comb through it by hand, fixing any issues that the tool identifies.

HTTPS

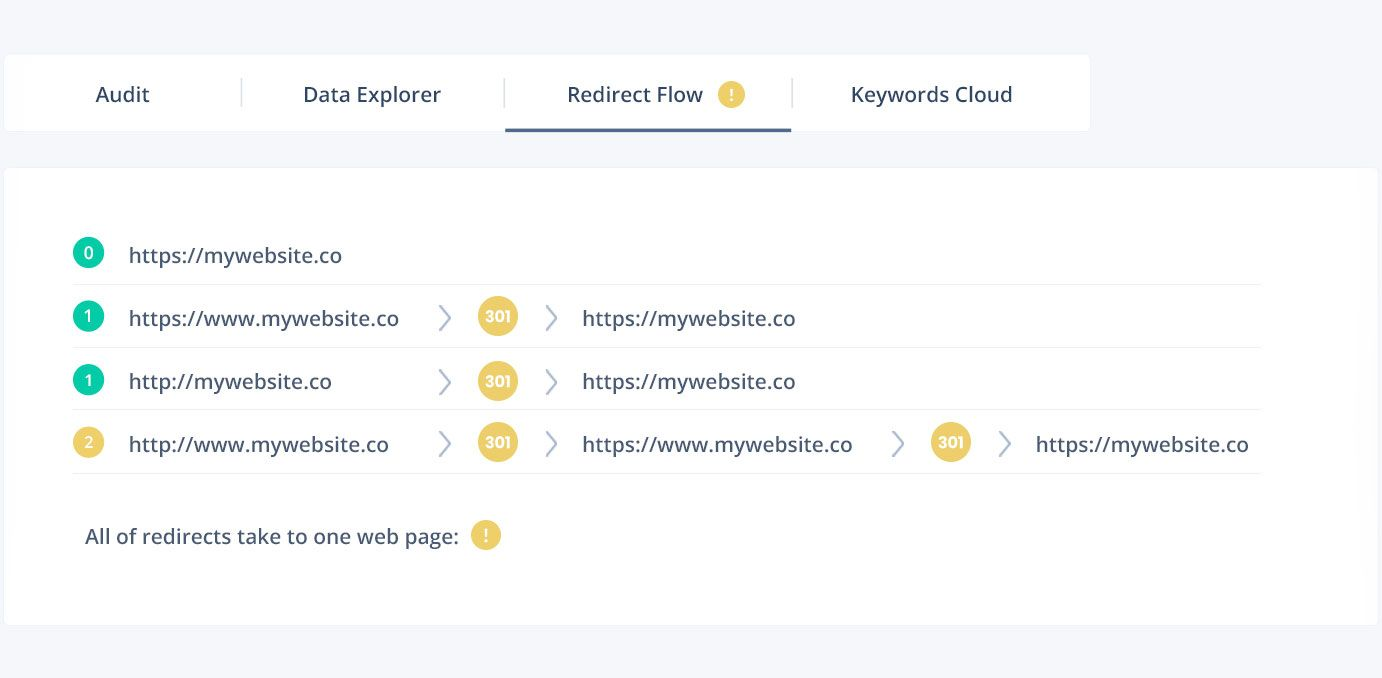

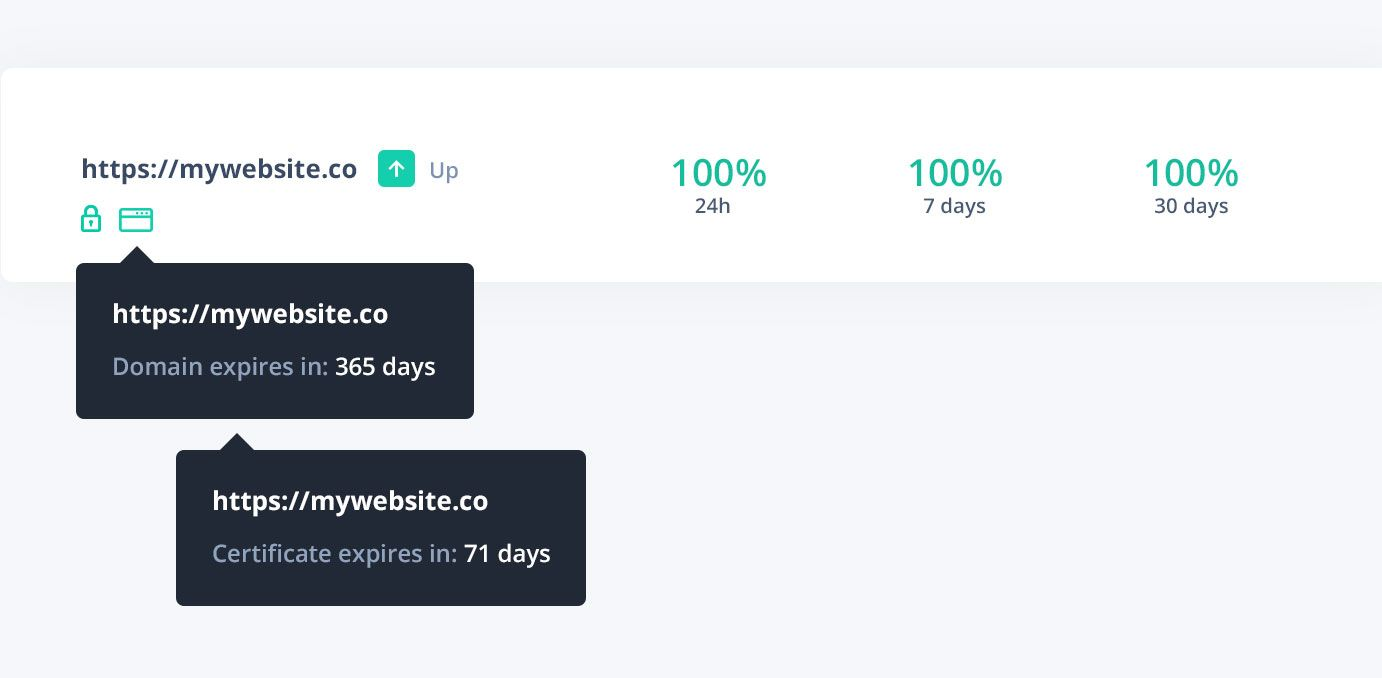

To help encourage website owners to make their websites more secure, Google gives a slight boost to sites that use HTTPS instead of HTTP. This is a simple technical change that you should have made already to keep your visitors secure.

Implementing this change will depend on your web host and other factors. If you have a complicated website that can’t be easily migrated to HTTPS, it might be easier to put Cloudflare or another proxying CDN solution in front of your website. This way, your users will benefit from HTTPS and faster load times with very little work on your part.

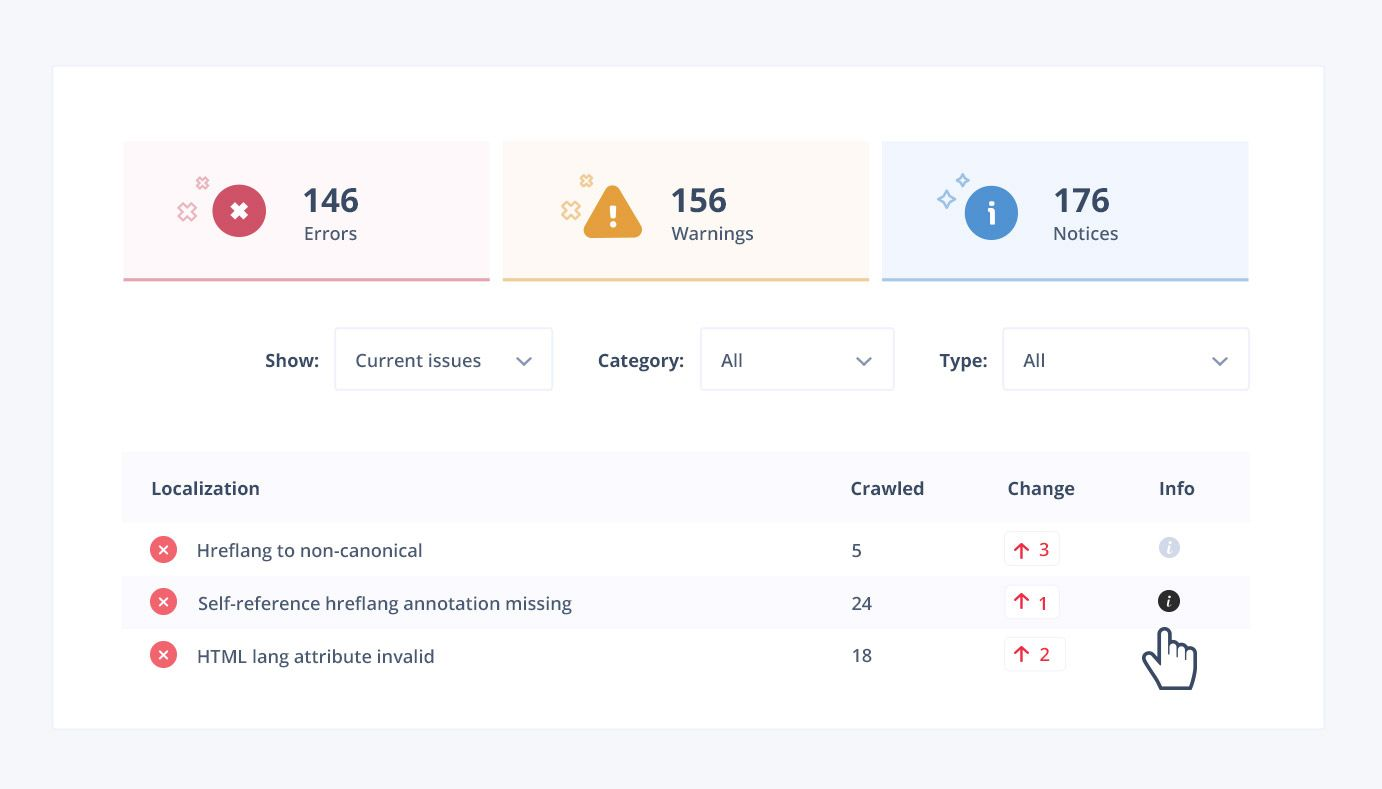

Hreflang

To appeal to visitors from various parts of the world, your website and content should be internationalized. Most importantly, this means translating content into a variety of languages. Search engines should be made aware of these translations so that they can drive traffic to the right version of your pages.

There’s a standard way of doing this on the web called hreflang. It’s an attribute used on the <link> tag that lets alternate versions of a page point to one another. It works with different top-level domains (like .com vs .mx) as well as URL schemes like /en/ and /es/.
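As a sketch of what these tags look like, the helper below generates one `<link rel="alternate">` tag per translated version. The `hreflang_links` function, the language codes, and the URLs are illustrative assumptions; `x-default` marks the fallback version for visitors whose language is not listed.

```python
from html import escape

def hreflang_links(alternates, default=None):
    """alternates maps a language (or language-region) code to that version's URL."""
    lines = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}">'
        for code, url in alternates.items()
    ]
    if default:
        lines.append(
            f'<link rel="alternate" hreflang="x-default" href="{escape(default)}">'
        )
    return "\n".join(lines)

# Hypothetical English and Mexican Spanish versions of the same page.
tags = hreflang_links(
    {"en": "https://example.com/en/pricing", "es-mx": "https://example.mx/precios"},
    default="https://example.com/en/pricing",
)
print(tags)
```

The same set of tags belongs in the head of every version of the page, including the version being served, so that the references go both ways.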

Structured Data and Schemas

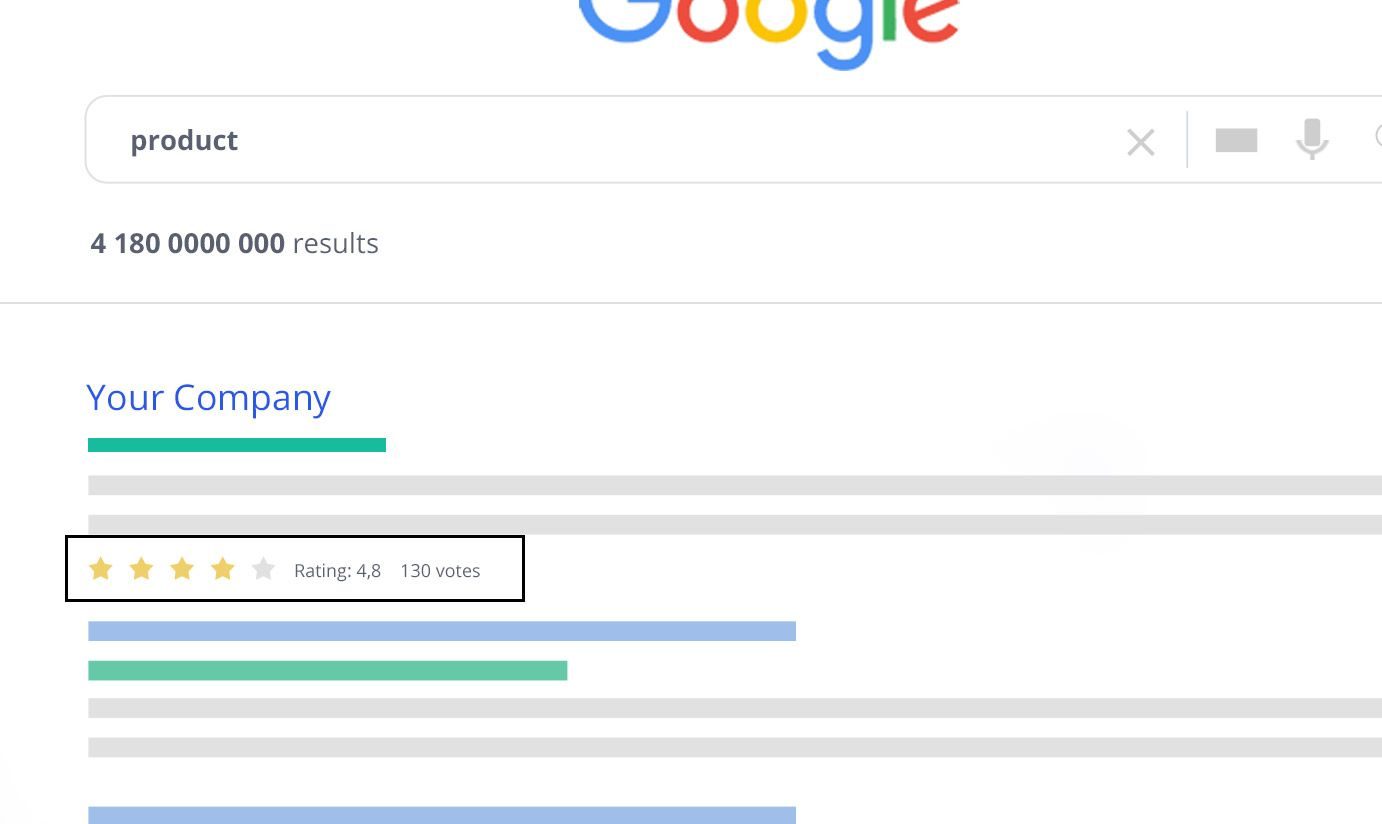

You might have noticed various kinds of structured data boxes throughout Google SERPs. These boxes generally source information from structured data present on websites. If your site is featured prominently as the answer to a common search query, you’re likely to receive a lot more hits than you would normally.

To be able to provide the kind of information used in Rich Snippets and other areas, try including schema markup. This is special HTML code that doesn’t directly appear for your visitors; instead, it can be optionally read by search engines and machines to display data from your site in other places.
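One common way to add schema markup is a JSON-LD block using the schema.org vocabulary. The sketch below builds one for an article; JSON-LD is only one of several markup formats, and the dates here are placeholders rather than real publication data.

```python
import json

def article_json_ld(headline, author, date_published, date_modified):
    """Return a <script> block with schema.org Article markup in JSON-LD form."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
        "dateModified": date_modified,
    }
    # Escape "</" so that a value can never close the script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"

snippet = article_json_ld(
    "Everything You Need to Know About Technical SEO",
    "Marcin",
    "2020-06-01",  # placeholder date
    "2020-09-15",  # placeholder date
)
print(snippet)
```

The resulting block goes in the page's HTML, where visitors never see it but crawlers can read it.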

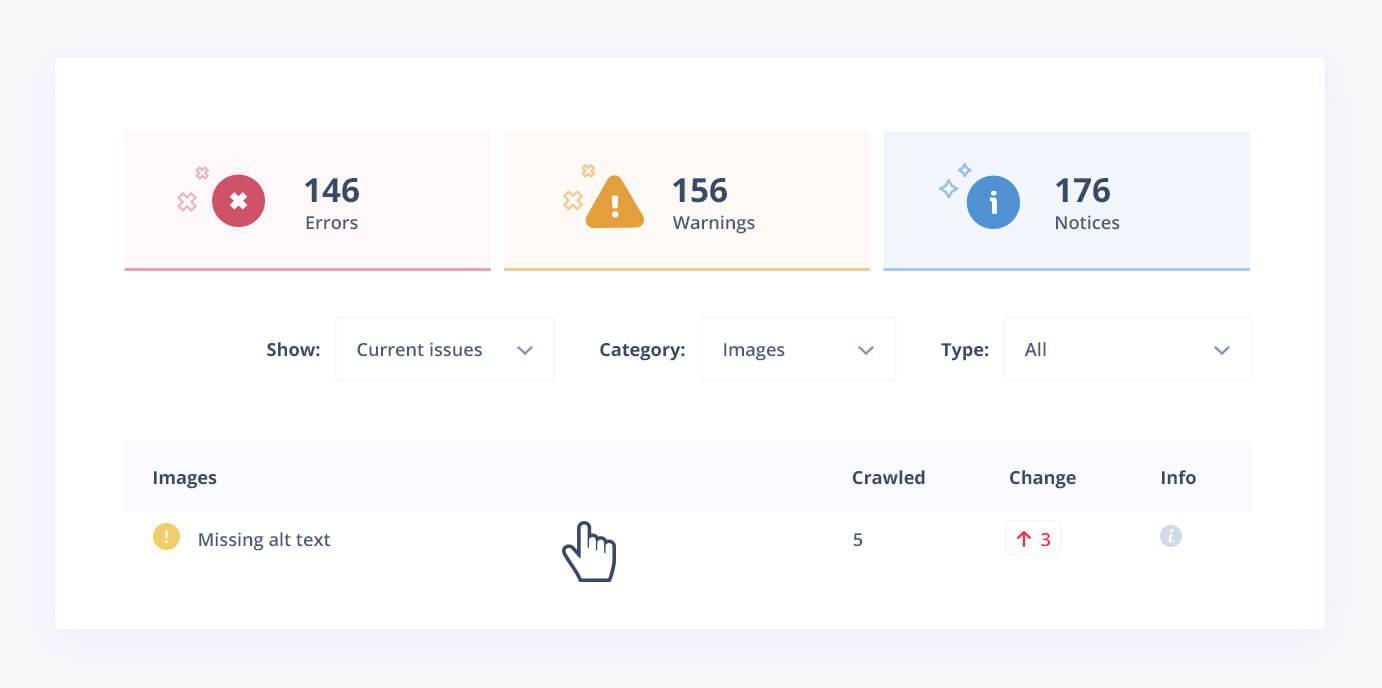

Accessibility: Image Alt Text and More

Even though making your website accessible to people with disabilities is required by law in many jurisdictions, lots of companies still neglect this important consideration.

Google and other search engines give websites with good accessibility better prominence in organic search rankings. Adding alt text to images, which helps screen readers describe pictures, is one of the simplest and most effective ways to help both people with disabilities and your SEO.
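Finding images without alt text is easy to automate. The sketch below scans a page's HTML and reports every `<img>` tag with no `alt` attribute at all; an empty `alt=""` is left alone because it is the usual way to mark purely decorative images. The sample markup is invented.

```python
from html.parser import HTMLParser

class MissingAltParser(HTMLParser):
    """Collects the src of every <img> tag that has no alt attribute."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attrs = dict(attrs)
        if "alt" not in attrs:
            self.missing.append(attrs.get("src") or "(no src)")

def images_missing_alt(html):
    parser = MissingAltParser()
    parser.feed(html)
    return parser.missing

# Hypothetical page fragment.
sample = """
<img src="traffic-chart.png" alt="Organic traffic by month">
<img src="team-photo.jpg">
<img src="divider.png" alt="">
"""

print(images_missing_alt(sample))
```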

Freshness

Stale content doesn’t do your web rankings any favors. Some website owners try to game this by updating the titles of their content pieces with the current year (think “Top 10 Smartphones for 2020”) without changing the content significantly. Don’t do this. Google and other search engines are smart enough to know what actually fresh content looks like.

When you update content, look to change the following things:

- “Updated” date, visible next to the “published” date on your website.

- Actual content on the page, even if it’s just a few sentences.

- The title if a date is mentioned.

- The Last-Modified HTTP header or the corresponding meta tags.
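The Last-Modified header uses a specific date format, which is easy to get wrong by hand. Here is a minimal sketch that formats it with Python's standard library; the update timestamp is a placeholder, and how you attach the header to a response depends on your server or framework.

```python
from datetime import datetime, timezone
from email.utils import format_datetime

def last_modified_header(updated_at):
    """Format a timezone-aware datetime as an HTTP Last-Modified value (always GMT)."""
    return format_datetime(updated_at.astimezone(timezone.utc), usegmt=True)

# Placeholder: the moment the article content was last changed.
updated = datetime(2020, 9, 15, 8, 30, tzinfo=timezone.utc)
header = last_modified_header(updated)
print("Last-Modified:", header)  # Last-Modified: Tue, 15 Sep 2020 08:30:00 GMT
```

Whatever date you send here should match the visible "Updated" date on the page, so that the signals agree with one another.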

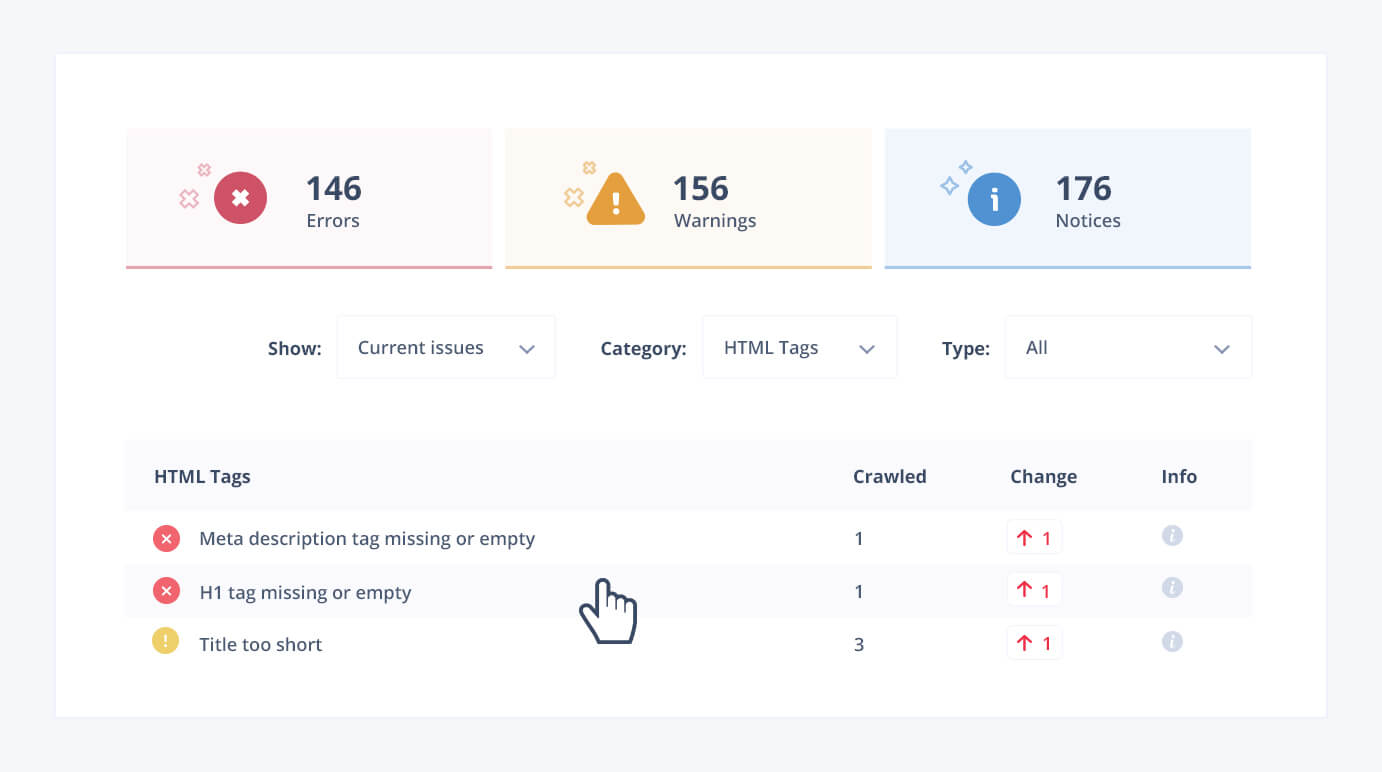

Robots.txt and Meta Tags

Although these issues are some of the simplest SEO problems to solve, they still plague many website owners. The robots.txt file is intended to keep unwanted spiders from crawling your website, but sometimes it is misconfigured or left in place by accident, preventing Google from accessing your website at all.
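You can test a robots.txt file before deploying it. The sketch below uses Python's built-in `urllib.robotparser` to ask whether a given crawler may fetch a URL. Both robots.txt samples and the /admin/ path are hypothetical, and Python's parser may not interpret every rule exactly the way Googlebot does.

```python
from urllib.robotparser import RobotFileParser

def can_crawl(robots_txt, user_agent, url):
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)

# A hypothetical file that accidentally blocks every crawler from the whole site.
too_strict = """
User-agent: *
Disallow: /
"""

# A narrower version that only keeps crawlers out of an assumed /admin/ area.
intended = """
User-agent: *
Disallow: /admin/
"""

print(can_crawl(too_strict, "Googlebot", "https://example.com/blog/"))  # False
print(can_crawl(intended, "Googlebot", "https://example.com/blog/"))    # True
```

A blanket `Disallow: /` is a common leftover from staging environments, so it is worth checking for after every launch.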

Another similar issue is misused robots meta tags and nofollow attributes. These can block search engines from indexing a particular page or following a link onto another page. While they’re useful in many situations, they become a problem when they interfere with search engine indexing.
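A quick audit for these tags can also be scripted. The sketch below collects the directives from a page's robots meta tag and lists any links marked nofollow. The class name and the sample page are assumptions made for illustration.

```python
from html.parser import HTMLParser

class RobotsDirectiveParser(HTMLParser):
    """Collects robots meta directives and the targets of rel="nofollow" links."""

    def __init__(self):
        super().__init__()
        self.meta_directives = set()
        self.nofollow_links = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            content = attrs.get("content") or ""
            self.meta_directives.update(
                d.strip().lower() for d in content.split(",") if d.strip()
            )
        elif tag == "a" and "nofollow" in (attrs.get("rel") or "").lower().split():
            self.nofollow_links.append(attrs.get("href"))

# Hypothetical page that is hidden from the index and has one nofollow link.
page = """
<html><head>
  <meta name="robots" content="noindex, nofollow">
</head><body>
  <a href="/pricing">Pricing</a>
  <a href="https://partner.example/" rel="nofollow sponsored">Partner</a>
</body></html>
"""

audit = RobotsDirectiveParser()
audit.feed(page)
print(sorted(audit.meta_directives))
print(audit.nofollow_links)
```

If a page you want ranked shows up with `noindex` in its directives, that tag is the first thing to remove.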

Analyze and Improve Your Site’s Technical SEO with Seodity

Many of these technical SEO issues are easiest to detect with the right automated tools. Seodity offers powerful on-demand SEO tools, including auditing for on-site technical SEO. You can identify content issues, solve problems with your meta tags, analyze your competitors, and even monitor your site’s performance all from one easy, centralized dashboard.

In addition to using Seodity, try using Google Search Console and actual search engines to test your site’s performance. Seodity has access to lots of data, but Search Console has the most up-to-the-minute information on what Google has crawled recently. You can combine Seodity’s strategic information and suggestions with Google’s specific data to solve even the hairiest of SEO problems.

Marcin is co-founder of Seodity
